Added: http://qr.ae/E6fuI is probably what you should read instead. Maybe I’ll merge the two someday, and add some comments about diagonalising an operator.

The eigenvectors of a matrix summarise what it does.

- Think about a large, dense (not sparse) matrix. A lot of computations are implied in that block of numbers. Some of those computations might overlap each other: 2 steps forward, 1 step back, 3 steps left, 4 steps right … that kind of thing, but in 400 dimensions. The eigenvectors aim at the end result of it all.

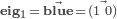

The eigenvectors stay on the same line through the origin before & after a linear transformation is applied. They can be stretched, shrunk, or flipped, but not knocked off that line. (& they are the only vectors that do so.)

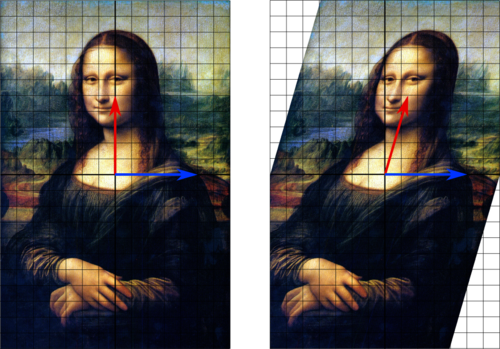

For example, consider a shear repeatedly applied to ℝ².

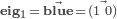

In the above, the arrow that stays on its original line is an eigenvector of the shear; call it eig₁. (The red arrow is not an eigenvector because it shifted over.)
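To see the same thing numerically, here is a minimal pure-Python sketch. It assumes a horizontal shear S = [[1, k], [0, 1]] with a hypothetical k = 1 (the figure's exact shear isn't given), applies it three times to a horizontal vector and to a diagonal one, and checks whether each vector stays on its original line. The helper names `apply` and `on_same_line` are made up for illustration.

```python
# Hypothetical shear factor: the figure's exact shear isn't given.
K = 1.0
SHEAR = ((1.0, K),
         (0.0, 1.0))


def apply(m, v):
    """Multiply a 2x2 matrix (tuple of rows) by a 2-vector."""
    return (m[0][0] * v[0] + m[0][1] * v[1],
            m[1][0] * v[0] + m[1][1] * v[1])


def on_same_line(u, v, tol=1e-12):
    """True if u and v lie on one line through the origin (2D cross product is 0)."""
    return abs(u[0] * v[1] - u[1] * v[0]) < tol


for start in ((1.0, 0.0), (1.0, 1.0)):   # horizontal vector, diagonal vector
    v = start
    path = []
    for _ in range(3):                    # apply the shear repeatedly
        v = apply(SHEAR, v)
        path.append(v)
    kept = all(on_same_line(start, w) for w in path)
    print(start, "->", path, "eigenvector" if kept else "not an eigenvector")
```

Any k ≠ 0 gives the same picture: only vectors along the x-axis keep their line, and they keep their length too, so their eigenvalue is 1.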

The eigenvalues say how their eigenvectors scale during the transformation, and whether they turn around.

If λᵢ = 1.3 then |eigᵢ| grows by 30%. If λᵢ = −2 then eigᵢ doubles in length and points backwards. If λᵢ = 1 then |eigᵢ| stays the same. And so on. Above, λ₁ = 1 since eig₁ stayed the same length.

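It’s nice to add that Σᵢ λᵢ = tr(A) and ∏ᵢ λᵢ = det(A) for a square matrix A (counting eigenvalues with multiplicity, complex ones included): the eigenvalues add up to the trace and multiply to the determinant.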
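Here is a small pure-Python sketch of that scaling for the 2×2 case. It assumes a hypothetical matrix A = [[1.3, 1], [0, −2]], picked so its eigenvalues are exactly the 1.3 and −2 used above. It finds the eigenvalues as the roots of the characteristic polynomial λ² − (tr A)λ + det A = 0, reads an eigenvector off a row of A − λI, and checks that A·v is just v scaled by λ. The function names are illustrative, not from any library.

```python
import cmath
import math


def eigenvalues_2x2(a, b, c, d):
    """Roots of lambda^2 - tr*lambda + det = 0 for A = [[a, b], [c, d]]."""
    tr = a + d
    det = a * d - b * c
    disc = tr * tr - 4 * det
    # Real square root when possible; complex otherwise (e.g. for rotations).
    root = math.sqrt(disc) if disc >= 0 else cmath.sqrt(disc)
    return (tr + root) / 2, (tr - root) / 2


def eigenvector_2x2(a, b, c, d, lam, tol=1e-12):
    """A nonzero v with (A - lam*I) v = 0, read off a nonzero row of A - lam*I."""
    if abs(b) > tol:
        return (b, lam - a)        # row 1: (a - lam)*x + b*y = 0
    if abs(c) > tol:
        return (lam - d, c)        # row 2: c*x + (d - lam)*y = 0
    # A is diagonal, so the coordinate axes are eigenvectors.
    return (1.0, 0.0) if abs(a - lam) < tol else (0.0, 1.0)


# Hypothetical example matrix chosen to have eigenvalues 1.3 and -2.
a, b, c, d = 1.3, 1.0, 0.0, -2.0
lams = eigenvalues_2x2(a, b, c, d)
for lam in lams:
    v = eigenvector_2x2(a, b, c, d, lam)
    av = (a * v[0] + b * v[1], c * v[0] + d * v[1])
    # A v is just v scaled by lam (and flipped when lam < 0).
    assert all(abs(av[i] - lam * v[i]) < 1e-9 for i in range(2))
    print(f"lambda = {lam:+.2f}, v = ({v[0]:.2f}, {v[1]:.2f}), A v = ({av[0]:.2f}, {av[1]:.2f})")

print(f"sum of eigenvalues {sum(lams):.4f}, trace {a + d:.4f}")
print(f"product of eigenvalues {lams[0] * lams[1]:.4f}, determinant {a * d - b * c:.4f}")
```

The last two lines compare the eigenvalues against the trace and determinant. For bigger matrices you would reach for a linear-algebra library rather than the quadratic formula.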

For a long time I wrongly thought an eigenvector was, like, its own thing. But it’s not. Eigenvectors are a way of talking about a (linear) transform / operator. So eigenvectors are always the eigenvectors of some transform. Not their own thing.

Put another way: eigenvectors and eigenvalues are a short, universally comparable way of summarising a square matrix. Looking at just the eigenvalues (the spectrum) tells you more relevant detail about the matrix, faster, than trying to understand the entire block-of-numbers and how the parts of the block interrelate. Looking at the eigenvectors tells you where repeated applications of the transform will “leak” (if they leak at all).
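One way to watch that “leak” is power iteration: apply the matrix over and over, rescale to unit length each time, and see which direction the vector settles into. Below is a minimal pure-Python sketch. It assumes a hypothetical symmetric matrix [[2, 1], [1, 2]] (eigenvalues 3 and 1), a single eigenvalue of largest |λ|, and a start vector with some component along that eigenvalue’s eigenvector.

```python
import math


def matvec(m, v):
    """Multiply a square matrix (list of rows) by a vector."""
    return [sum(row[j] * v[j] for j in range(len(v))) for row in m]


def power_iteration(m, v, steps=100):
    """Repeatedly apply m, normalising to unit length each step.

    If m has a single eigenvalue of largest |lambda| and v has some component
    along its eigenvector, v lines up with that eigenvector.
    """
    for _ in range(steps):
        w = matvec(m, v)
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    # Rayleigh quotient v . (m v) estimates that eigenvalue (v is unit length).
    lam = sum(x * y for x, y in zip(v, matvec(m, v)))
    return lam, v


M = [[2.0, 1.0],
     [1.0, 2.0]]          # hypothetical example: eigenvalues 3 and 1
lam, v = power_iteration(M, [1.0, 0.0])
print(f"dominant eigenvalue ~ {lam:.6f}, direction ~ ({v[0]:.4f}, {v[1]:.4f})")
```

Starting from (1, 0), the part along the eigenvalue-1 direction shrinks by a factor of 3 relative to the eigenvalue-3 part on every step, so the vector ends up pointing along (1, 1)/√2.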

To recap: eigenvectors keep their line under the matrix transform (they only get stretched or flipped); they simplify the matrix transform; and the λ’s tell you how much the |eig|’s change under the transform.

Now a payoff.

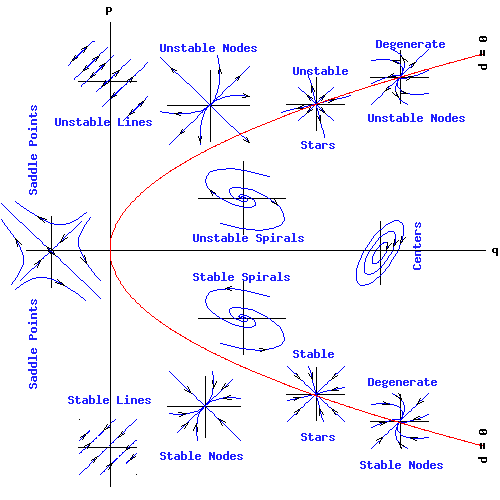

Dynamical systems make sense now.

If repeated applications of a matrix = a dynamical system, then the eigenvalues explain the system’s long-term behaviour.

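I.e., they tell you whether and how the system stabilises, or … doesn’t stabilise. Roughly: if every |λᵢ| < 1 the state shrinks toward zero; if any |λᵢ| > 1 a typical starting state blows up, mostly along the eigenvector with the largest |λᵢ|.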
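A minimal pure-Python sketch of that rule for a discrete-time system x_{t+1} = A x_t. The two 2×2 systems are hypothetical examples; the second is the first with every entry doubled, which doubles both eigenvalues and pushes the larger one past 1. The helper names are illustrative only.

```python
import cmath


def spectral_radius_2x2(m):
    """Largest |eigenvalue| of a 2x2 matrix, from lambda^2 - tr*lambda + det = 0."""
    (a, b), (c, d) = m
    tr, det = a + d, a * d - b * c
    root = cmath.sqrt(tr * tr - 4 * det)
    return max(abs((tr + root) / 2), abs((tr - root) / 2))


def simulate(m, x, steps):
    """Iterate the discrete-time system x_{t+1} = m x_t, return the final state."""
    for _ in range(steps):
        x = (m[0][0] * x[0] + m[0][1] * x[1],
             m[1][0] * x[0] + m[1][1] * x[1])
    return x


# Hypothetical example systems (the second is the first with every entry doubled).
stable = ((0.5, 0.2), (0.1, 0.6))      # eigenvalues 0.7 and 0.4
unstable = ((1.0, 0.4), (0.2, 1.2))    # eigenvalues 1.4 and 0.8

for name, m in (("stable", stable), ("unstable", unstable)):
    rho = spectral_radius_2x2(m)
    x50 = simulate(m, (1.0, 0.0), 50)
    if rho < 1:
        verdict = "dies down to 0"
    elif rho > 1:
        verdict = "blows up"
    else:
        verdict = "borderline: needs a closer look"
    print(f"{name}: largest |lambda| = {rho:.2f}, {verdict}; x_50 = ({x50[0]:.3g}, {x50[1]:.3g})")
```

The verdict uses only the eigenvalues; the simulation is there to confirm it. In the unstable case both coordinates end up growing together because (1, 1) is the eigenvector for λ = 1.4.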

Dynamical systems model interrelated systems like ecosystems, human relationships, or weather. They also unravel mutual causation.

What else can I do with eigenvectors?

Eigenvectors can help you understand:

- helicopter stability

- quantum particles (the von Neumann formalism)

- guided missiles

- PageRank 1 2

- the Fibonacci sequence

- your Facebook friend network

- eigenfaces

- lots of academic crap

- graph theory

- mathematical models of love

- electrical circuits

- JPEG compression 1 2

- Markov processes

- operators & spectra

- weather

- fluid dynamics

- systems of ODEs (which are just continuous-time dynamical systems)

- principal component analysis in statistics

  - for example, principal components (eigenvectors of the correlation matrix, then varimax-rotated) were used to try to identify the dimensions of brand personality
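Of the items above, the Fibonacci sequence is the quickest to check by hand. Each step maps (Fₙ, Fₙ₋₁) to (Fₙ₊₁, Fₙ) with the matrix [[1, 1], [1, 0]], whose eigenvalues are the roots of λ² − λ − 1 = 0, namely φ = (1 + √5)/2 and ψ = (1 − √5)/2. Writing the starting vector in the eigenvector basis gives Binet’s formula Fₙ = (φⁿ − ψⁿ)/√5. A minimal pure-Python sketch comparing it with plain iteration (floating-point rounding means the formula version is only trustworthy for moderate n):

```python
import math


def fib_iterative(n):
    """F_0 = 0, F_1 = 1, F_{n+1} = F_n + F_{n-1}, by plain iteration."""
    a, b = 0, 1
    for _ in range(n):
        a, b = b, a + b
    return a


def fib_from_eigenvalues(n):
    """Binet's formula built from the eigenvalues of [[1, 1], [1, 0]]."""
    sqrt5 = math.sqrt(5)
    phi = (1 + sqrt5) / 2      # dominant eigenvalue, |phi| > 1
    psi = (1 - sqrt5) / 2      # |psi| < 1, so psi**n fades away
    return round((phi ** n - psi ** n) / sqrt5)


for n in (1, 2, 10, 30):
    print(n, fib_iterative(n), fib_from_eigenvalues(n))

# Long-run growth rate: F_{n+1} / F_n approaches the dominant eigenvalue phi.
print(fib_iterative(41) / fib_iterative(40), (1 + math.sqrt(5)) / 2)
```

This is the dynamical-systems story again in miniature: ψⁿ dies out because |ψ| < 1, so in the long run the sequence grows like φⁿ.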

Plus, maybe you will have a cool idea or see something in your life differently if you understand eigenvectors intuitively.